The family‑office principle – powered by AI

Five more years have passed. It is spring 2033 in Mainheide, near Frankfurt.

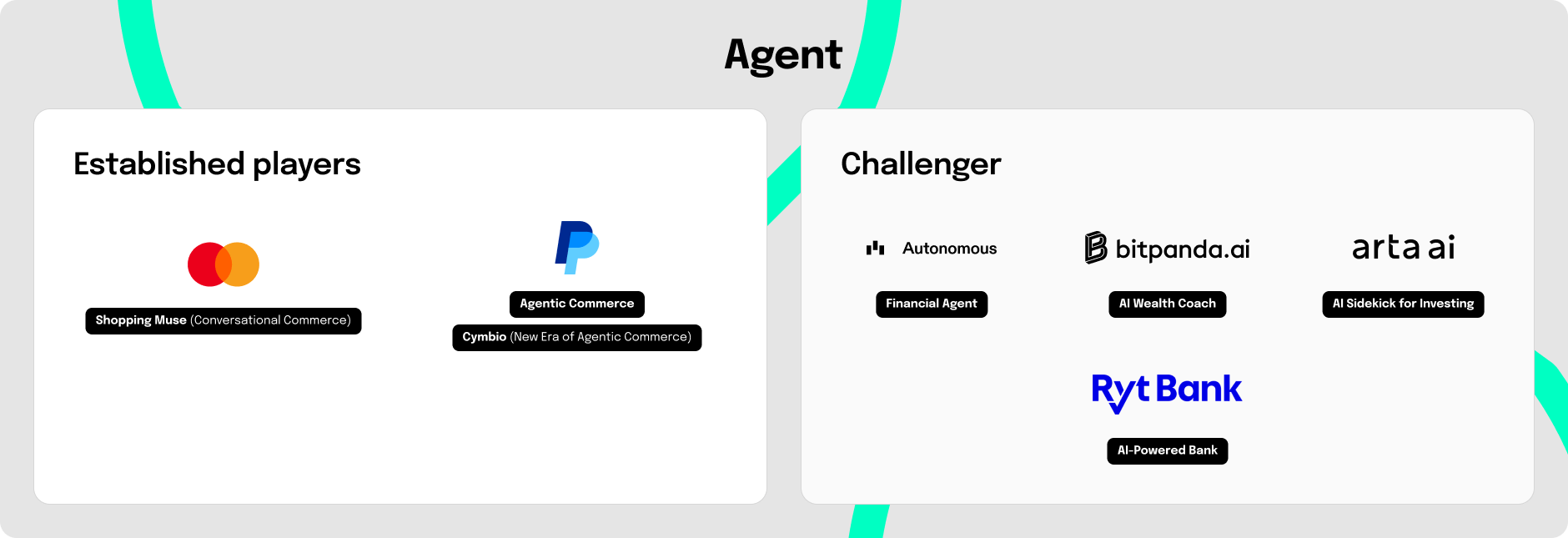

Emma is 35 years old and now owns her home. She rarely opens banking apps, not for lack of interest, but because much of her banking runs in the background. She regularly maintains her rules: limits, categories, blocklists, approval thresholds, and exceptions. Within these guardrails, a system of specialized agents handles routines such as bills, savings contributions, subscription optimization, payments and, when needed, portfolio adjustments. The same system covers fraud: prevention, detection, investigation, and loss compensation.

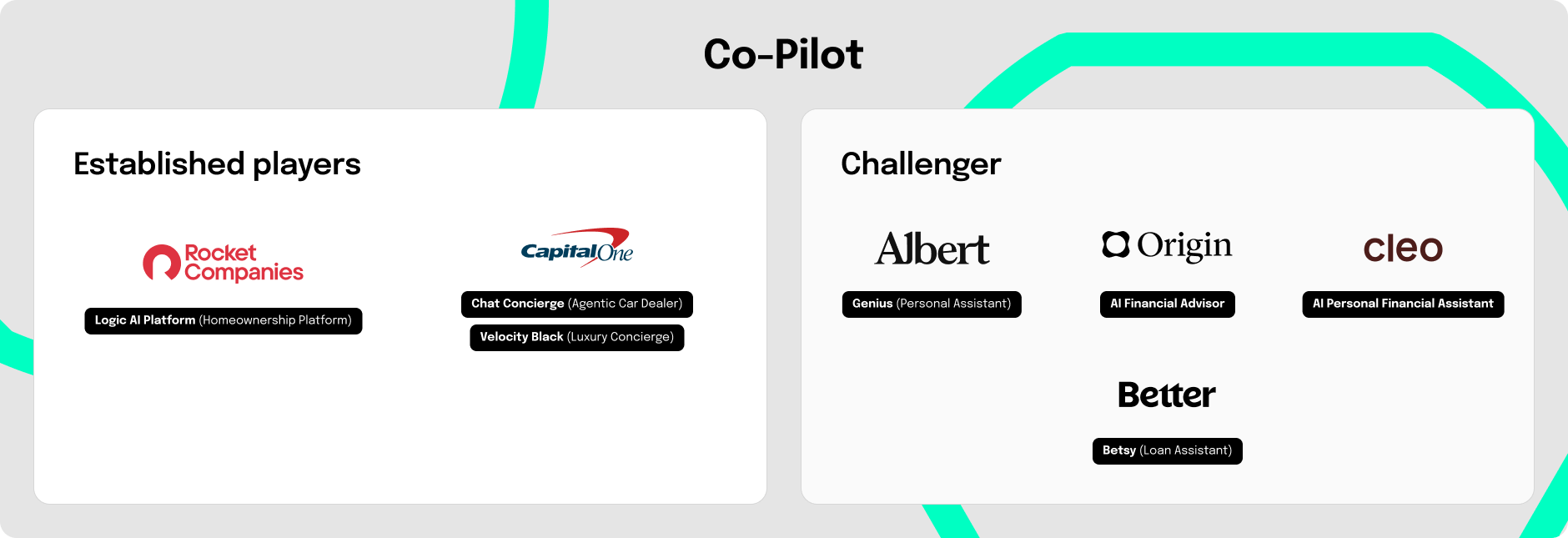

We call this third stage Agent because the AI doesn’t just explain or prepare; it acts independently, within the rule set, with logs and clear approvals. Emma’s financial agent orchestrates multiple AI agents in distinct roles: cashflow/liquidity, payments, contracts, investments, and fraud.

The logic resembles a classic family office for very wealthy households, as it operated before AI arrived there. In the future, you won’t have to be very wealthy to experience truly personalized financial support in action: in retail banking, these roles will be performed not by people but by AI agents.

Emma’s agent is omnipresent: via voice at home, via messenger on the go, or on the desktop. A finance cockpit provides an overview with drill‑down. And because the agent monitors continuously, it reaches out proactively when something looks off, and it prioritizes so that the important things don’t get lost.

For the financial agent to truly reduce effort, the Co‑Pilot setup needs an additional agent layer: policies with limits and approval thresholds for autonomous decisions and execution, signable actions, explainable logs, security and fraud handling including dispute and recovery, plus monitoring and a kill switch, so that everything remains consistent.
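To make the policy part of this layer more tangible, here is a minimal sketch of how a payment request might be checked against Emma’s rules before the agent acts. Everything in it is an assumption for illustration: the names `Policy`, `AuditLog`, `Decision`, and `evaluate_payment`, the field names, and the order of the checks are hypothetical and not taken from any real banking system.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"                # agent may act on its own
    NEEDS_APPROVAL = "needs_approval"  # user must sign off first
    BLOCK = "block"                    # action is refused


@dataclass
class Policy:
    """Hypothetical rule set maintained by the user."""
    per_payment_limit: float           # hard cap for any single payment
    approval_threshold: float          # above this amount, ask the user
    blocklist: set[str] = field(default_factory=set)
    kill_switch: bool = False          # stops all autonomous actions


@dataclass
class AuditLog:
    """Keeps one explainable entry per decision."""
    entries: list[dict] = field(default_factory=list)

    def record(self, payee: str, amount: float,
               decision: Decision, reason: str) -> None:
        self.entries.append({
            "payee": payee,
            "amount": amount,
            "decision": decision.value,
            "reason": reason,
        })


def evaluate_payment(policy: Policy, log: AuditLog,
                     payee: str, amount: float) -> Decision:
    """Check one payment against the policy and log the outcome."""
    if policy.kill_switch:
        decision, reason = Decision.BLOCK, "kill switch is active"
    elif payee in policy.blocklist:
        decision, reason = Decision.BLOCK, "payee is on the blocklist"
    elif amount > policy.per_payment_limit:
        decision, reason = Decision.BLOCK, "amount exceeds the per-payment limit"
    elif amount > policy.approval_threshold:
        decision, reason = Decision.NEEDS_APPROVAL, "amount exceeds the approval threshold"
    else:
        decision, reason = Decision.EXECUTE, "within limits and thresholds"
    log.record(payee, amount, decision, reason)
    return decision


# Usage with made-up values
policy = Policy(per_payment_limit=2000.0, approval_threshold=500.0,
                blocklist={"Shady Ltd"})
log = AuditLog()
print(evaluate_payment(policy, log, "Utility Co", 120.0))
print(evaluate_payment(policy, log, "Roofer", 900.0))
```

In this sketch the kill switch is checked first so that it overrides every other rule, and each decision is logged together with its reason, which is one simple way to keep the agent’s actions explainable after the fact.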